We would use the VS Code editor to write our python scripts.You would see the DAGs in the snapshot below. Open the web browser and go to localhost:8250 and log in. Ssh -p 2222 Install Python and Airflow on a Virtual Linux machineįollowing that, install the Airflow by the following command:Īirflow users create -u admin - f first_name - l last_name> - role Admin - e your_emailĪfter this step, our Apache webserver is now running. In the windows machine, open the terminal and make a connection by typing

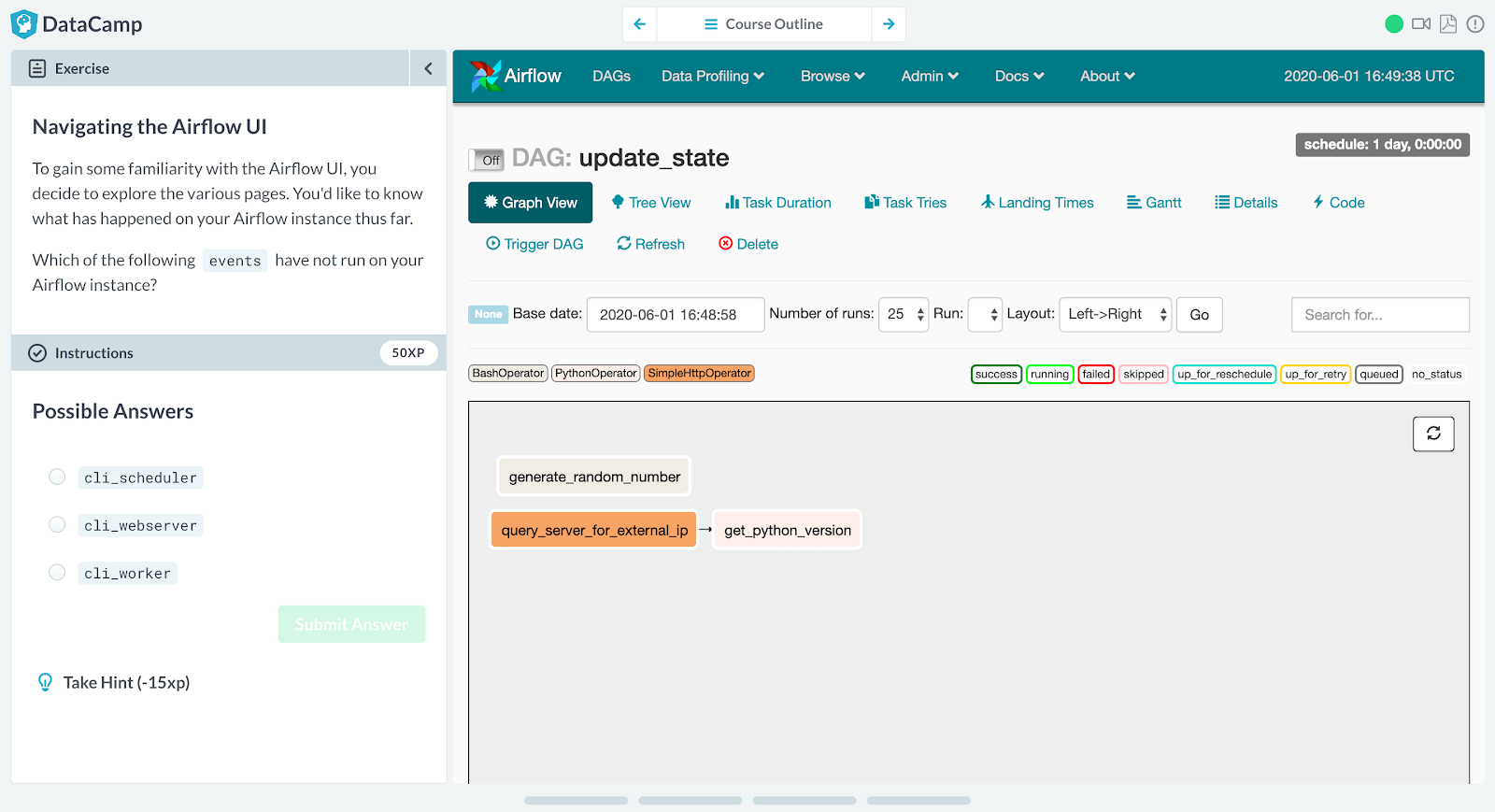

‘sudo adduser airflow’ and type in the password Now, create a user, here it is “airflow”.The installation window will look something similar to this:.Run the virtual machine and install the OS.Guest 8080 to Host 8250 will be used for Airflow UI In this, add the two ports as shown below: Under Advanced, click on the Port Forwarding button: Now let us configure the network, so go to Settings for the created machine and go to Network.Once you have completed the setup, you will see the virtual machine in this manner:.Write the name of your Ubuntu server and click on next in the next few steps to complete the setup.Open the Oracle VM Virtual box manager and click on the ‘new’ button.Also, download the Ubuntu Server ISO file before moving forward. Install the Virtual Box VM to run the Ubuntu Server.Setting up the Airflow and VS code editor Download the dummy cat details data from an API.Setting up the Airflow and VS code editor.Things we will do to create our first Airflow ETL pipeline in this blog: Frequently used to handle big data processing pipelinesĭAG for airflow Airflow employs a workflow as a Directed Acyclic Graph (DAG) in which multiple tasks can be executed independently.A sequence of tasks that are started or scheduled or triggered by an event.In this blog, we will show how to configure airflow on our machine as well as write a Python script for extracting, transforming, and loading (ETL) data and running the data pipeline that we have built. Python is used to write Airflow, and Python scripts are used to create workflows. Apache Airflow is an open-source workflow management platform for authoring, scheduling, and monitoring workflows or data pipelines programmatically.
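To make the DAG idea concrete, here is a minimal sketch in plain Python rather than in Airflow itself. It uses the standard library's `graphlib` module to order three hypothetical tasks (`extract`, `transform`, `load`) by their dependencies, which is roughly what a scheduler does before running them. The sample cat records, the function names, and the field names are all assumptions made for illustration; they are not the API data or the DAG code used in this blog.

```python
from graphlib import TopologicalSorter

# Hypothetical records standing in for the cat details API response.
SAMPLE_CATS = [
    {"name": " tom ", "age": "3"},
    {"name": "luna", "age": "5"},
]


def extract():
    """Return raw records (a real pipeline would call the API here)."""
    return [dict(row) for row in SAMPLE_CATS]


def transform(rows):
    """Clean up names and convert ages from strings to integers."""
    return [
        {"name": row["name"].strip().title(), "age": int(row["age"])}
        for row in rows
    ]


def load(rows, store):
    """Append the cleaned rows to an in-memory store and return the count."""
    store.extend(rows)
    return len(rows)


# Each task maps to the set of tasks that must finish before it can start.
dag = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
}


def run_pipeline():
    """Run the tasks in dependency order and return the loaded rows."""
    store = []
    results = {}
    for task in TopologicalSorter(dag).static_order():
        if task == "extract":
            results[task] = extract()
        elif task == "transform":
            results[task] = transform(results["extract"])
        elif task == "load":
            results[task] = load(results["transform"], store)
    return store


order = list(TopologicalSorter(dag).static_order())
loaded = run_pipeline()
print(order)
print(loaded)
```

In an actual Airflow DAG file, each of these functions would typically become a task and the dependencies would be declared between the tasks, with Airflow handling the scheduling and retries.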
